In many organizations, AI deployment is moving faster than governance maturity. As tools like Microsoft Copilot and custom Azure OpenAI solutions move from the experimental phase to core business operations, the conversation has shifted.

Governance is no longer just a policy issue for the legal department; it has become a critical management and security hurdle. Without a robust governance model, the risks are immediate and operational.

To bridge this gap, Nordic decision-makers are looking toward global standards. But with so many frameworks available, which ones actually matter for a Microsoft-oriented environment?

Top 3 Global Standards For AI Governance

Three frameworks are especially useful because they solve different parts of the governance problem. Together, they cover governance structure, risk thinking, and AI-specific security exposure. Separately, each still leaves important gaps.

NIST AI Risk Management Framework (NIST AI RMF)

NIST AI RMF is strongest as a governance and risk framework. It helps organizations build a shared language for discussing AI risk, trust, accountability, and oversight across leadership, business, and technical teams.

Its main value is strategic clarity. It helps organizations move beyond vague ideas of “responsible AI” and into a more structured discussion about what should be assessed, monitored, and owned. That makes it a strong starting point for organizations that are still building governance maturity.

Its limitation is that it stays at a higher level. It helps define what good governance should look like, but it does not tell you how to configure Purview labels, review Microsoft 365 oversharing risks, or validate connected AI applications in practice. For that reason, it is best seen as a strategic foundation, not a complete operating model.

ISO 42001

ISO 42001 is the strongest as a management system standard. It gives organizations a more formal structure for governing AI through defined roles, policies, review processes, and continuous improvement.

This makes it especially useful for organizations that need stronger management discipline, clearer accountability, and a governance model that can scale over time.

Its limitation is that it is not a technical implementation guide. It helps create governance order, but it does not by itself solve issues such as oversharing, access design, integration security, or AI-specific testing.

OWASP Top 10 for LLM Applications

OWASP Top 10 for LLM Applications is strongest as a security lens for AI-enabled systems. It focuses on the most important risks in LLM and generative AI applications, including prompt injection, insecure output handling, and broader application-layer weaknesses.

This makes it highly relevant for organizations building custom AI applications, integrating LLMs into business systems, or exposing AI through APIs and connected workflows. It helps teams test whether an AI solution is secure enough to use in practice, not just whether it is useful.

Its limitation is scope. OWASP helps with AI security, but it does not provide a full governance model for ownership, management accountability, or enterprise-wide review processes.

Nordic AI Adoption Demands Governance Beyond Global Standards

Global standards are useful, but they rarely solve the full problem for Nordic organizations. The gap appears when broad guidance meets regional regulation, Microsoft-heavy environments, and day-to-day operating complexity.

In practice, Nordic organizations need more than high-level principles. They need governance that works with local compliance expectations, Microsoft-specific controls, and clear decisions around ownership, access, oversight, and rollout. Standards help define the direction, but they do not always show how to run governance in a real operating environment.

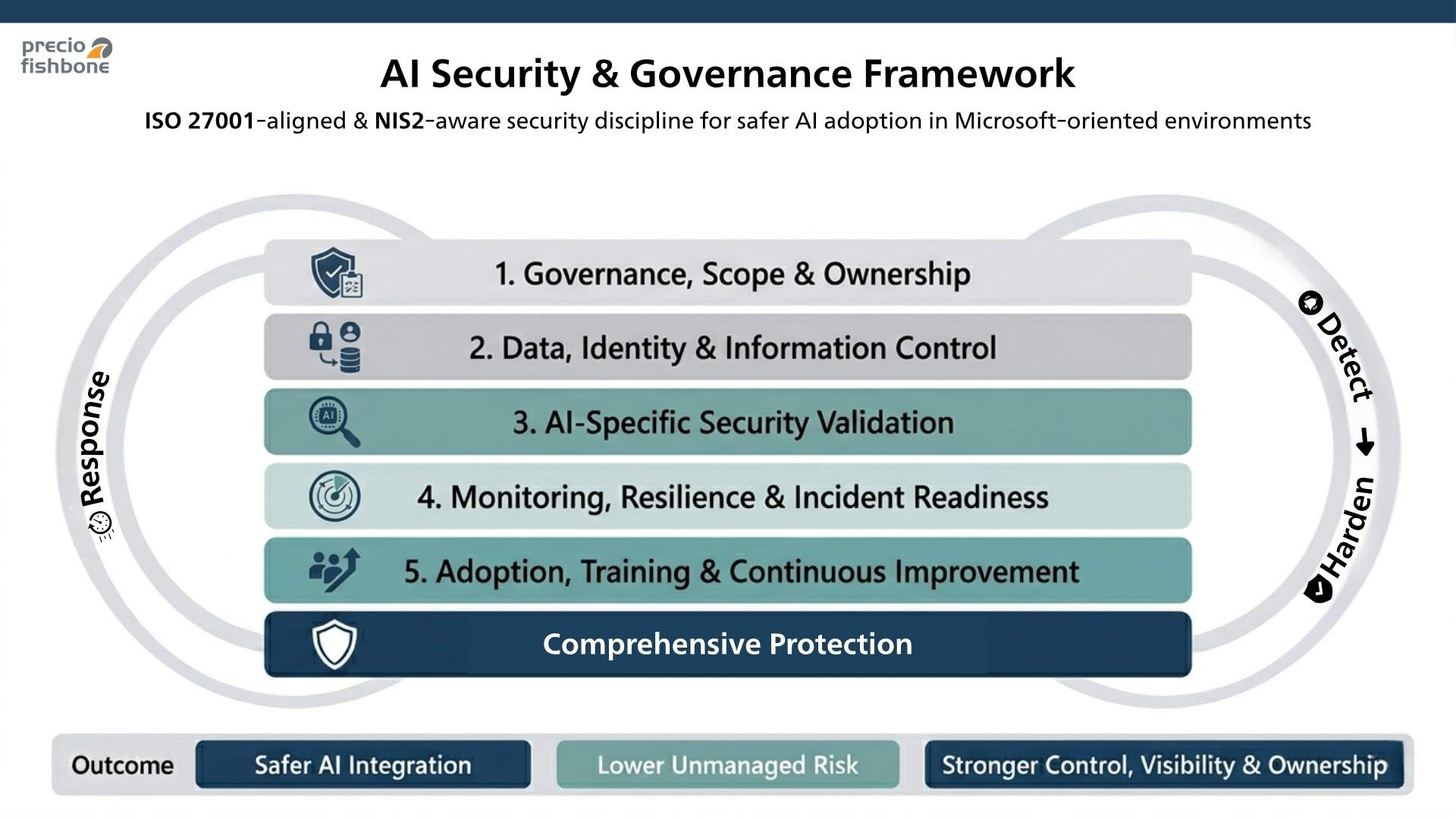

A Practical Framework For AI Governance & Security

Precio’s approach is best understood as a practical operating model, not a new formal standard. It is designed to help organizations apply AI more safely in Microsoft environments by combining governance, security, and operational control.

The model is structured in a way that aligns with ISO 27001 governance discipline while supporting practical implementation in a Zero Trust architecture mindset.

1. Governance, scope, and ownership

The first layer defines what is in scope, who owns the decision-making, and how accountability is assigned. That includes AI use cases, data boundaries, review responsibilities, and escalation points. Without this layer, AI adoption tends to drift into isolated business decisions without consistent oversight.

2. Data, identity, and information control

This layer focuses on access, classification, and protection of data used by AI. Key technologies include Microsoft Entra ID for identity and access control, and Microsoft Purview for classification, sensitivity labels, and data protection. It is also where Zero Trust principles help reduce oversharing and limit unnecessary exposure across Microsoft environments.

3. AI-specific security validation

This layer reviews whether AI-connected applications, APIs, and environments are secure enough for real use. It includes AI security audits, integration security testing, and validation of connected Microsoft and Azure-based AI solutions.

4. Monitoring, resilience, and incident readiness

This layer improves visibility and control as AI adoption grows. It focuses on monitoring, traceability, and incident readiness, supported by tools such as Microsoft Defender, Microsoft Sentinel, and Microsoft 365 security controls. The objective is not only to prevent problems, but to make AI activity easier to understand, review, and respond to when something unexpected happens.

5. Adoption, training, and continuous improvement

This layer helps organizations make governance sustainable over time. It focuses on safe adoption, targeted training, review cycles, and continuous improvement so teams can use AI with stronger control and lower unmanaged risk.

A Clearer Path to Secure AI Adoption

Global standards for AI governance provide an important foundation, but they do not fully solve the operational challenge of AI adoption. Organizations still need a practical way to turn governance into clear ownership, stronger controls, and safer rollout decisions.

If your organization is defining how to govern AI without slowing innovation, Precio Fishbone can help translate governance expectations into practical controls for real AI services.

Explore our AI offeringFrequently Asked Questions

Is the EU AI Act one of the main AI governance frameworks organizations should start with?

Not in the same way as NIST AI RMF, ISO 42001, or OWASP. The AI Act is a regulatory framework, while those three are more useful as practical tools for risk management, governance structure, and AI-specific security work.

Is ISO 42001 enough for Microsoft 365 and Copilot governance?

Usually not on its own. It is strong for management discipline and accountability, but organizations still need practical controls for access, classification, oversharing, monitoring, and connected applications.

How is AI governance different from AI security?

AI governance defines ownership, review, accountability, and decision-making. AI security focuses on protecting systems, data, integrations, and users from technical risk. In practice, organizations need both working together.

Why do Nordic organizations need more than a global framework?

Because they often need to apply governance in environments shaped by Microsoft controls, connected business systems, and a regulatory context that places strong emphasis on accountability, information control, and structured security work.

What should an organization assess before scaling AI across teams?

Start with data exposure, access rights, classification, ownership, connected apps, monitoring capability, and review processes. If those foundations are weak, AI rollout usually moves faster than governance can safely support.