Microsoft’s Security Platform Vision

Microsoft talks about “Frontier Firms”: organizations where human ambition stays at the center and fleets of agents help tackle everything from cyber threats to sustainability and operations.

But none of that matters if leaders cannot answer a simple question from the board: “Are we actually in control of our AI usage and risk?”

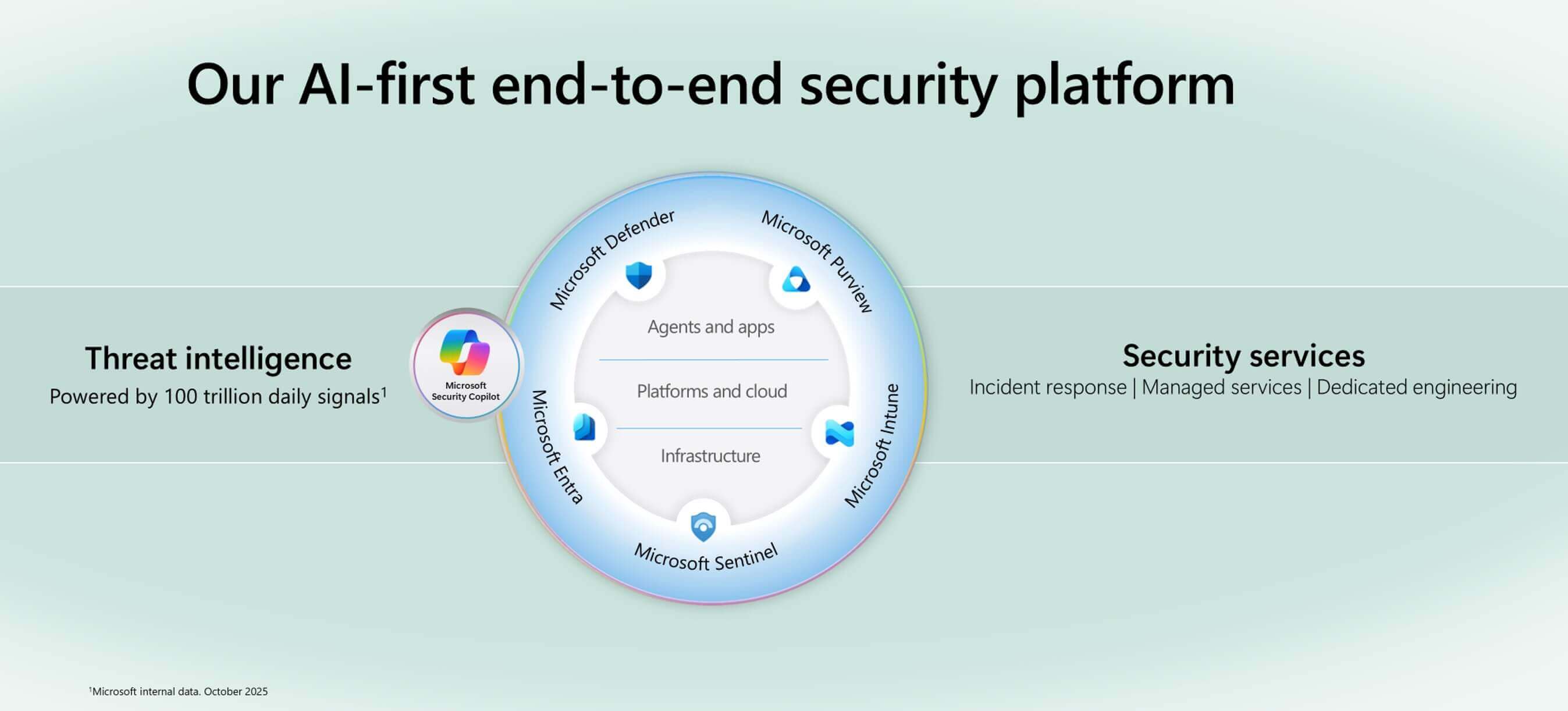

That is why Microsoft frames security as the core primitive of this new era. The vision is an AI-first, end-to-end security platform that feels ambient and autonomous, just like the agents it protects.

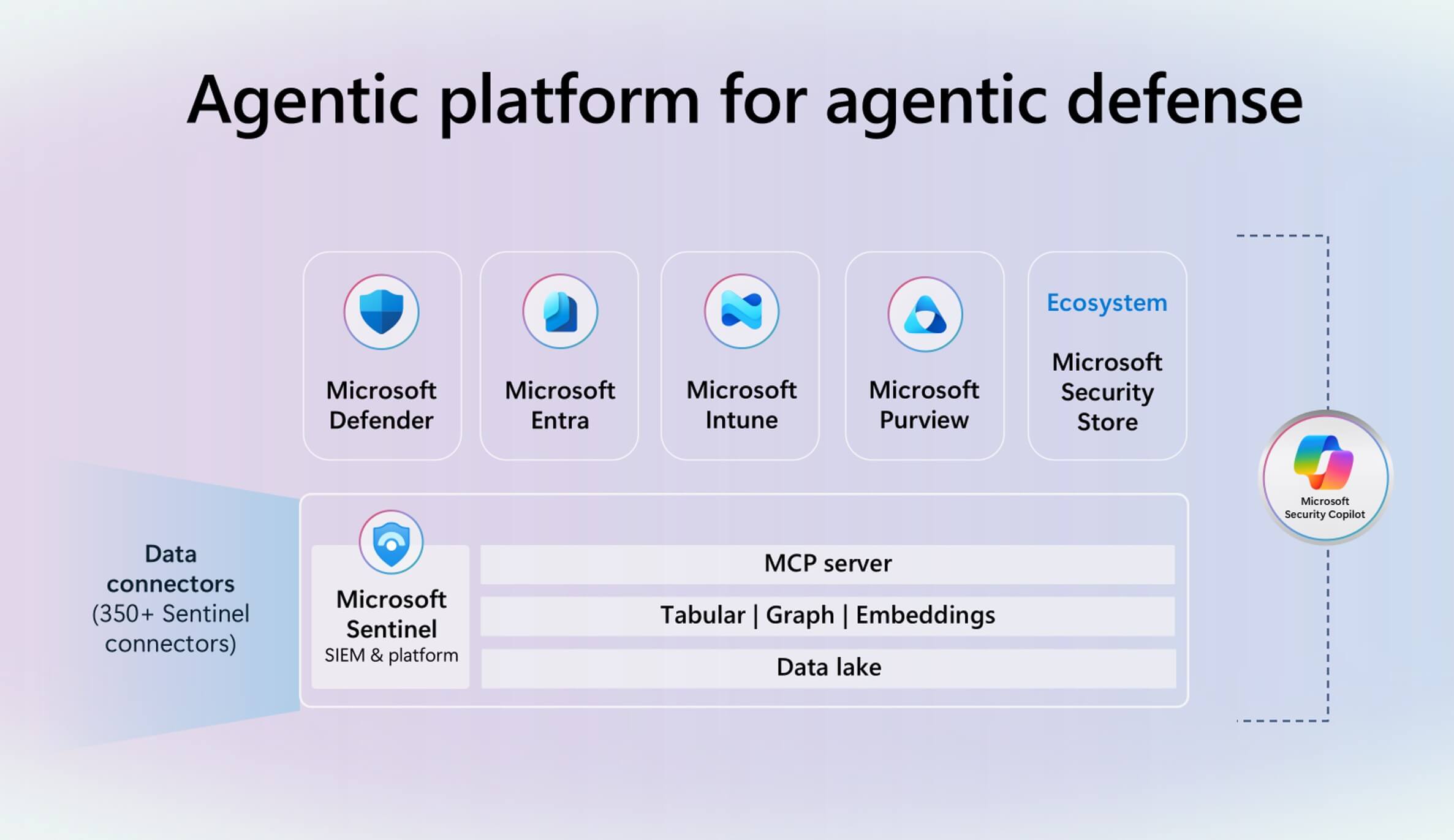

Identity, data protection, compliance, threat defense, and observability all come together across Defender, Entra, Purview, Intune, Sentinel, and Security Copilot, so security teams can see AI risk in one place and act faster than attackers.

Securing AI Agents & Apps

Agent 365: a control plane for AI agents

Many AI agents arrive in environments without formal approval or central visibility, a pattern often called “shadow AI.” The risks are clear: sensitive data flowing into these agents, over-permissioned automation, and no consistent way to apply policy.

Agent 365 gives you a single control plane for AI agents: a registry and inventory of AI agents across the organization, risk-based access control through Entra, and graph views that show how agents, people, and data connect over time.

For CISOs, Agent 365 makes it easier to treat agents as a distinct class of identity and workload, with owners, sponsors, and policies. We explore this theme in more depth in our Agent 365 breakdown blog.
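To make the “agents as identities with owners, sponsors, and policies” idea concrete, here is a minimal sketch of the kind of check a registry enables: flag every agent that lacks an accountable owner, a sponsor, or any applied policy. The `AgentRecord` shape and the `find_ungoverned` helper are hypothetical illustrations, not the Agent 365 data model or API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


# Hypothetical record shape: NOT the Agent 365 schema, only an illustration
# of treating an agent as an identity with accountable humans and policies.
@dataclass
class AgentRecord:
    name: str
    owner: Optional[str] = None    # person accountable for the agent
    sponsor: Optional[str] = None  # business sponsor who approved it
    policies: List[str] = field(default_factory=list)  # applied policy names


def find_ungoverned(agents: List[AgentRecord]) -> List[str]:
    """Return names of agents missing an owner, a sponsor, or any policy.

    These are the "shadow AI" candidates a registry would surface for review.
    """
    return [
        a.name
        for a in agents
        if a.owner is None or a.sponsor is None or not a.policies
    ]


inventory = [
    AgentRecord("hr-faq-bot", owner="alice", sponsor="hr-lead", policies=["dlp-default"]),
    AgentRecord("sales-summarizer", owner="bob"),  # no sponsor, no policy
    AgentRecord("unknown-script-agent"),           # discovered, never registered
]

print(find_ungoverned(inventory))  # ['sales-summarizer', 'unknown-script-agent']
```

The point of the sketch is the design choice, not the code: once every agent has a record, “shadow AI” stops being invisible and becomes a simple query over missing fields.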

Data and identity guardrails for agents

As organizations start to deploy larger numbers of AI agents in production, the challenge shifts to: “How do we make sure every new agent, app, and Copilot scenario is secure by design?”

At Ignite 2025, Microsoft put this idea into practice by extending its security fabric into the development experience with Foundry Control Plane. In Microsoft Foundry, teams now get a single place to build, run, and secure entire fleets of agents.

Defender, Entra, and Purview are wired directly into this experience. Developers and security teams work from the same policies and real-time risk insights, from the first line of code through to production.

On the data side, Microsoft Purview is expanding its controls for Microsoft 365 Copilot. Admins now get richer oversharing reports in the Microsoft 365 admin center, automated bulk cleanup of overshared links, and data loss prevention that covers Copilot responses and chat prompts.

Organizations can also set automatic deletion schedules for Teams transcripts that contain sensitive information and apply tighter options to exclude sensitive files from Copilot processing in government cloud environments.

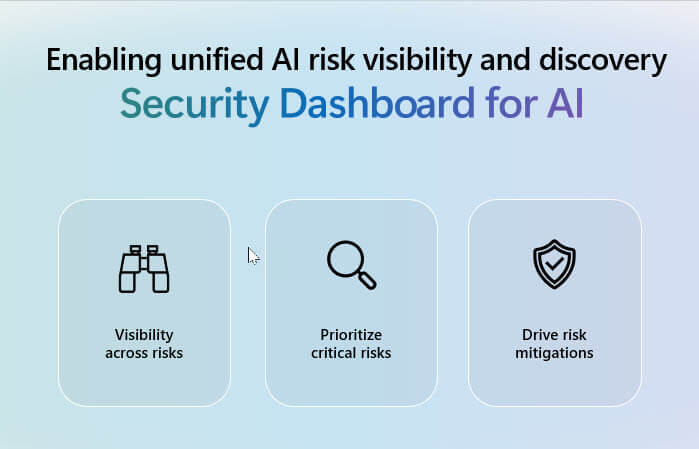

Security dashboard for AI: one view of AI risk

To help leaders see the bigger picture, Microsoft is also introducing a Security dashboard for AI. It gives a unified view of AI inventory, risk posture, and recommended guardrails across apps, agents, models, MCP servers, and data.

Signals from Entra, Purview, and Defender are aggregated so CISOs can see identity risk, data security issues, and cloud misconfigurations in one place, rather than in separate consoles.
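The value of aggregation is easiest to see with a toy example. The sketch below assumes findings arrive as simple `(source, asset, finding)` tuples, groups them per asset, and then lists assets flagged by more than one product, since correlated risk is usually what deserves attention first. The tuple format, asset names, and helper functions are hypothetical; this is not how the Security dashboard for AI is implemented.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical signal shape: (source product, asset name, finding text).
Signal = Tuple[str, str, str]


def unified_view(signals: List[Signal]) -> Dict[str, Dict[str, List[str]]]:
    """Group findings by asset, then by source, so one asset's identity, data,
    and cloud issues appear side by side instead of in three consoles."""
    view = defaultdict(lambda: defaultdict(list))
    for source, asset, finding in signals:
        view[asset][source].append(finding)
    # Convert nested defaultdicts to plain dicts for clean printing/comparison.
    return {asset: dict(by_source) for asset, by_source in view.items()}


def multi_source_assets(view: Dict[str, Dict[str, List[str]]]) -> List[str]:
    """Assets flagged by more than one source: correlated risk to triage first."""
    return sorted(a for a, by_source in view.items() if len(by_source) > 1)


signals = [
    ("Entra", "support-agent", "risky sign-in"),
    ("Purview", "support-agent", "oversharing of finance files"),
    ("Defender", "support-agent", "public storage endpoint"),
    ("Defender", "billing-app", "outdated TLS configuration"),
]

view = unified_view(signals)
print(multi_source_assets(view))  # ['support-agent']
```

Viewed separately, each product reports one moderate issue about `support-agent`; grouped together, the same agent shows up as the asset with risk across identity, data, and cloud.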

Securing Platforms & Cloud

Microsoft Defender and GitHub Advanced Security

At Ignite 2025, Microsoft pushed security further “left” into the development lifecycle by wiring Microsoft Defender directly into GitHub Advanced Security.

Instead of waiting for issues to show up in production or in a SIEM, developers can view Defender-enriched security findings from within a GitHub project and understand, in real time, which issues actually matter for their organization.

With Defender in the loop, those findings are prioritized so teams can focus on the most important, high-impact issues instead of hundreds of low-value warnings.

The last piece is AI-powered fixes. Using GitHub Autofix together with GitHub Copilot, developers can move from “there is a problem” to “here is a proposed patch” in a single workflow.

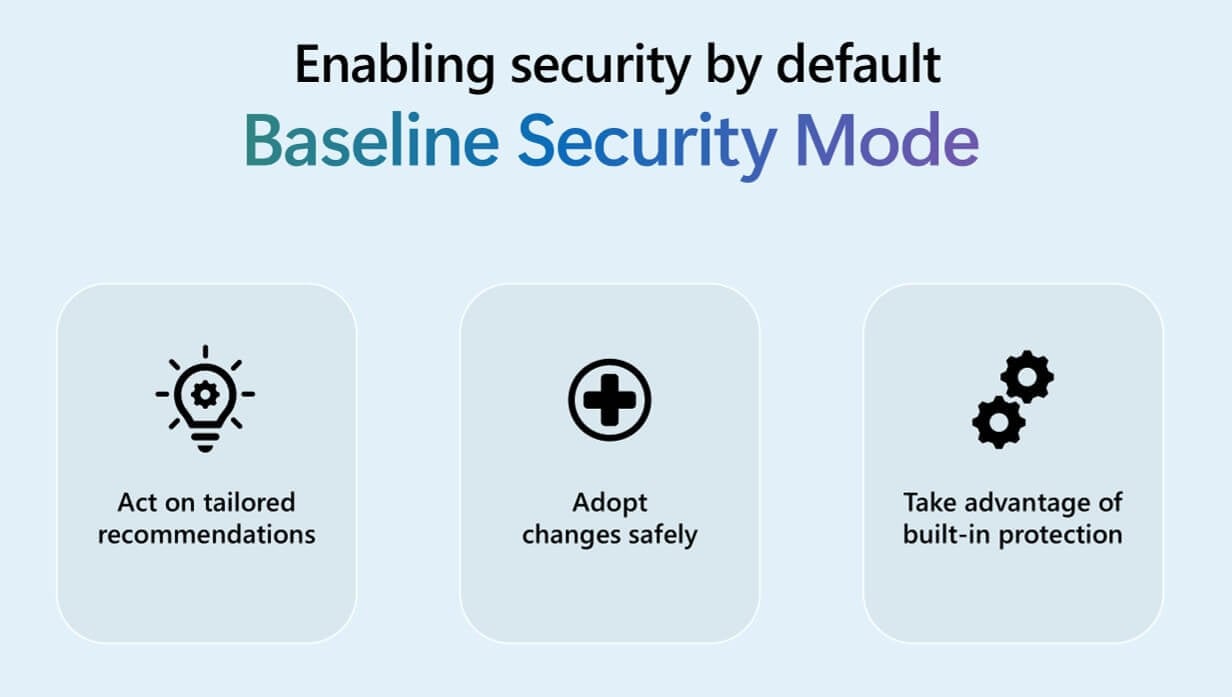

Microsoft Baseline Security Mode

The second platform update is Microsoft Baseline Security Mode, now generally available, which packages Microsoft’s security research and recommended configurations into preconfigured, secure-by-default policies.

Baseline Security Mode lets admins apply Microsoft's research-backed recommendations through secure-by-default settings such as strong MFA, safer identity configurations, and device compliance, without having to tune each policy by hand.

Because it pulls together identity, device, and threat protections you already own, Baseline Security Mode helps you take advantage of built-in protection in Microsoft 365, Entra, and Defender, raising your overall security posture with far less manual configuration.

Securing with Agentic AI

The third pillar of Ignite’s security story is about putting agents on the defender’s side, not just the attacker’s.

Scott Woodgate explained how Microsoft Sentinel has evolved from “just a SIEM” into a platform for agentic defense. Recent waves of innovation have introduced a high-volume security data lake, a graph that maps relationships between entities, and an MCP server that lets agents interact directly with the platform.

Building on this, Microsoft is rolling out features like predictive shielding in Defender. When one device is attacked, Sentinel’s graph helps identify other devices that sit close to it along likely attack paths.

The platform can then proactively harden those devices, for example by changing boot protections or blocking access from a compromised account, before the attacker reaches them.
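The “close in the attack path” idea maps naturally onto a graph search. Below is a minimal sketch, assuming the environment is modeled as a simple adjacency list where an edge means an attacker could move between two nodes (for example through a shared admin account), and assuming a fixed hop limit defines “close.” Microsoft has not described Sentinel’s graph or the predictive shielding logic in these terms; the device names and the `devices_near_attack` helper are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set


def devices_near_attack(
    graph: Dict[str, List[str]], compromised: str, max_hops: int
) -> Set[str]:
    """Breadth-first search from the compromised node, returning every node
    reachable within max_hops edges (excluding the compromised node itself).

    In this sketch, an edge stands for a relationship an attacker could
    traverse, such as a shared credential or a session between devices.
    """
    seen = {compromised}
    frontier = deque([(compromised, 0)])
    nearby: Set[str] = set()
    while frontier:
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue  # do not expand past the hop budget
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                nearby.add(neighbor)
                frontier.append((neighbor, depth + 1))
    return nearby


# Hypothetical attack-path graph: laptop-1 shares an admin account with
# server-a, which can reach db-01; kiosk-9 is unrelated.
graph = {
    "laptop-1": ["server-a"],
    "server-a": ["laptop-1", "db-01"],
    "db-01": ["server-a"],
    "kiosk-9": [],
}

to_harden = devices_near_attack(graph, "laptop-1", max_hops=2)
print(sorted(to_harden))  # ['db-01', 'server-a']
```

The hardening step would then act on each returned device; that part is omitted because it depends entirely on the product. Breadth-first search is a natural fit here because it discovers nodes in order of hop distance, so the hop limit cleanly bounds how far ahead of the attacker the defense reaches.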

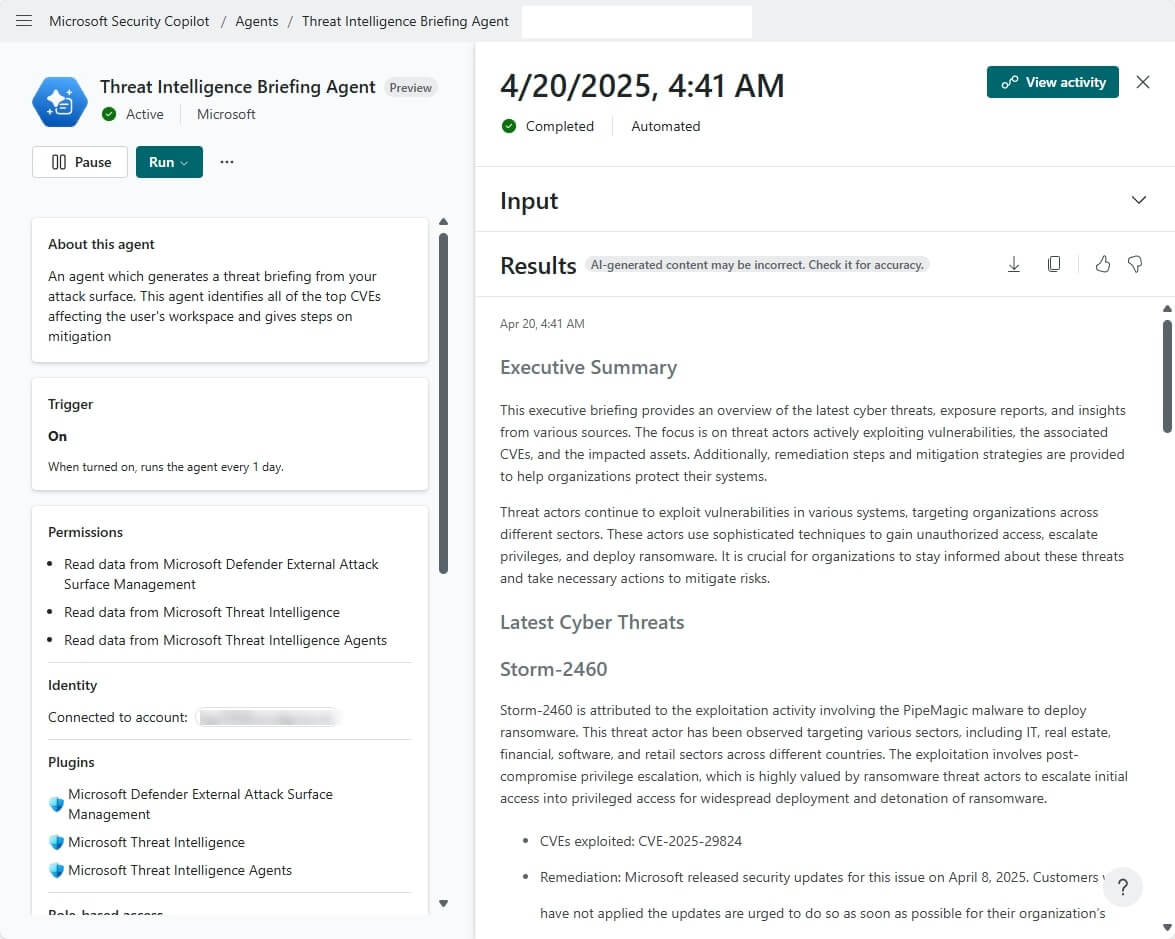

On top of that platform, Microsoft is rolling out a growing family of security agents that live inside the workflows security teams already use, including Threat Intelligence Briefing Agents, Threat Hunting Agents, and Data Security Triage Agents.

All of this is orchestrated through Security Copilot and plugged into Defender, Entra, Purview, Intune, Sentinel, and the new Security dashboard for AI, which gives leaders a clear map of their AI apps, agents, MCP servers, and associated risk.

What This All Means for Security & IT Leaders

Microsoft’s security story at Ignite 2025 is really about one thing: turning AI from a risky experiment into something you can actually run, measure, and defend at scale.

If you are planning your next steps with Copilot, Foundry, or AI agents, this is the moment to treat security as a platform decision, not a bolt-on. Contact us to map your AI security roadmap, identify quick wins, and design agentic defenses that match your real risk and governance needs.